You may need to extract the links (URLs) from a webpage for different purposes, e.g., internet research, web development, security assessments, or webpage testing. This article shows how to extract links from a webpage or HTML document in Windows.

How to Extract Links from a Webpage in Windows

There are several methods to extract the URLs from a webpage. Let’s start with a built-in method: your web browser’s Developer Tools.

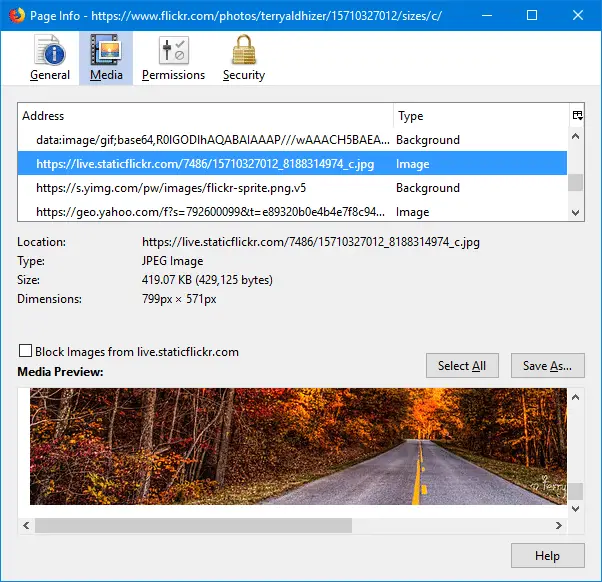

Using Your Web Browser’s Developer Tools

- Open Chrome or Firefox, and visit the website or webpage first.

- Press F12 to open the Developer Tools window.

- Click on the Console tab in Developer Tools.

- Clear the console output by clicking the Clear console button (in Chrome) or the Clear the Web Console output button (in Firefox).

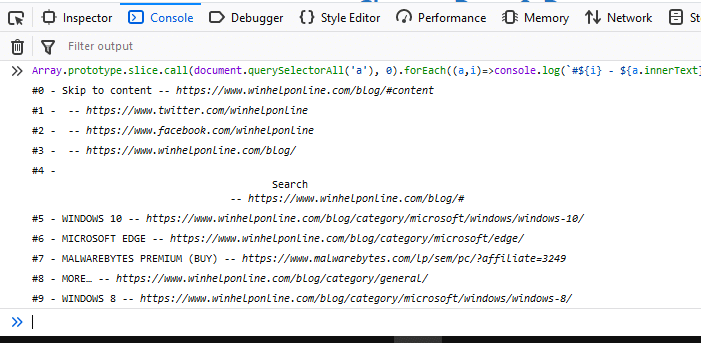

- Type the following code at the console prompt:

Array.prototype.slice.call(document.querySelectorAll('a'), 0).forEach((a,i)=>console.log(`#${i+1} - ${a.innerText} -- ${a.href}`));

This prints a numbered list of the links on that webpage in the console window, showing the link text and the URL of each link.

If you only want to grab the URLs without the number or the link text, use this command:

$$('a').forEach(a => console.log(a.href));

Copy the output to Notepad and save it.
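
The console output can also be de-duplicated and sorted before you copy it. The following is a minimal sketch rather than part of the commands above: `uniqueSortedLinks` is a hypothetical helper name, and `copy()` is assumed to be available as a console utility (it exists in the Chrome and Firefox Developer Tools console, not in scripts running on the page).

```javascript
// Hypothetical helper: returns the unique, sorted, non-empty href values
// from any array-like collection of objects that have an href property.
function uniqueSortedLinks(anchors) {
  const hrefs = Array.from(anchors, (a) => a.href).filter(Boolean);
  return [...new Set(hrefs)].sort();
}

// In the browser console, collect every <a> element on the current page.
// The typeof guards keep the snippet harmless outside the console.
if (typeof document !== 'undefined') {
  const list = uniqueSortedLinks(document.querySelectorAll('a')).join('\n');
  console.log(list);
  // Assumption: copy() is the Developer Tools console utility that places
  // text on the clipboard. It is skipped if it is not defined.
  if (typeof copy === 'function') copy(list);
}
```

If `copy()` is available, the list lands on the clipboard and can be pasted straight into Notepad.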

Using PowerShell

Launch PowerShell and use the following command-line syntax:

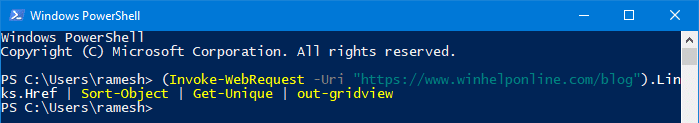

(Invoke-WebRequest -Uri "https://www.winhelponline.com/blog").Links.Href | Sort-Object | Get-Unique | Out-GridView

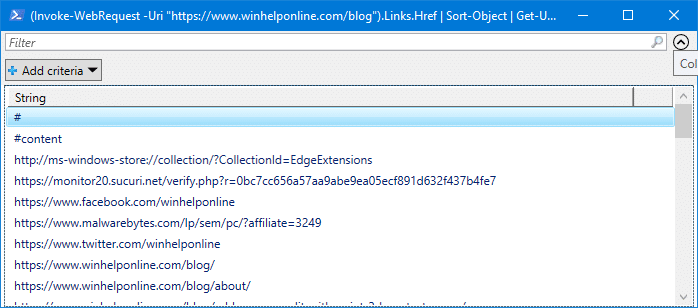

This gets the list of links in the specified webpage and displays it in a grid view window.

Another advantage of this PowerShell command is that it sorts the entries and also removes duplicate URLs from the collection.

The grid view control lets you filter URLs by keyword search, as well as copy the listings to the clipboard using Ctrl + C.

Grab title and URL

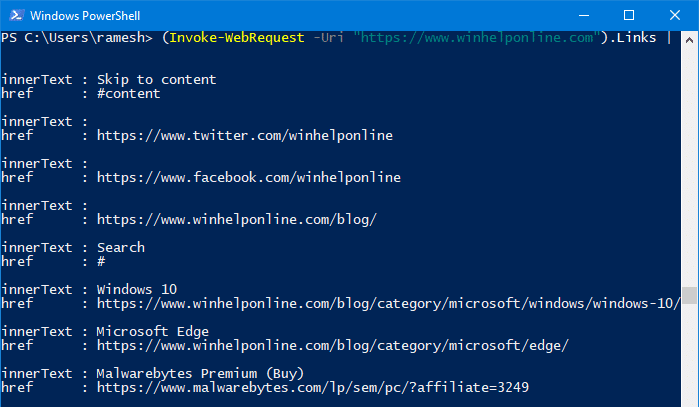

To view the innerText in addition to the corresponding links or URLs, run:

(Invoke-WebRequest -Uri "https://www.winhelponline.com").Links | Sort-Object href -Unique | Format-List innerText, href

The output lists the link text and the URL of each link. Duplicate URLs are removed automatically.

You can also copy the output to the clipboard automatically by piping it to clip:

(Invoke-WebRequest -Uri "https://www.winhelponline.com").Links | Sort-Object href -Unique | Format-List innerText, href | clip

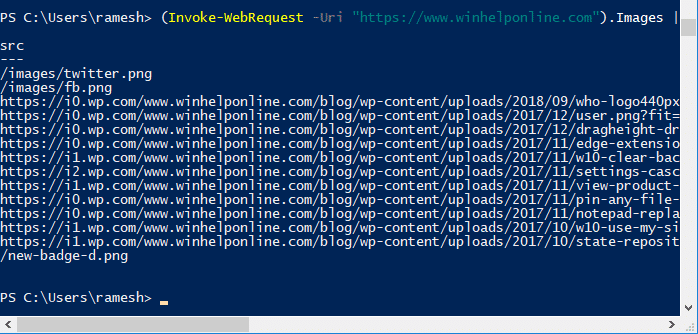

Grab Image URLs only

To extract the list of image URLs, use this syntax:

(Invoke-WebRequest -Uri "https://www.winhelponline.com").Images | Select-Object src

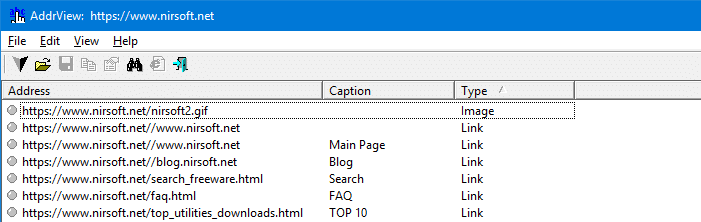
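
For comparison, the image URLs can also be listed from the browser console described earlier. This is a rough sketch under stated assumptions: `imageUrls` is a hypothetical helper name, and it reads `document.images`, which covers `<img>` elements only (CSS background images are not included).

```javascript
// Hypothetical helper: returns the unique, non-empty src values from any
// array-like collection of objects that have a src property.
function imageUrls(images) {
  const srcs = Array.from(images, (img) => img.src).filter(Boolean);
  return [...new Set(srcs)];
}

// In the browser console, document.images holds the page's <img> elements.
// The typeof guard keeps the snippet harmless outside the console.
if (typeof document !== 'undefined') {
  imageUrls(document.images).forEach((src) => console.log(src));
}
```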

Using AddrView from Nirsoft

Nirsoft’s AddrView tool automatically extracts the links (including image links) from a given webpage or a local HTML file and lists them in a grid view.

You can sort the results by Type and copy only the image URLs to the clipboard or save them to a file. You can also copy selected items or all items to the clipboard, or save the entries to a file.

Other than the above methods, for browsers like Chrome or Firefox, there are plenty of extensions or add-ons that will grab the URL or image links from the currently active web page in your browser.


Hi

Thank you for the information.

Could you please show me what to do after I find a link in the page? For example:

((Invoke-WebRequest -Uri "https://$url").Links.Href | Sort-Object | Get-Unique)[0]

How can I open this link in a browser?

Press Ctrl + Shift + I and go to the Console tab.